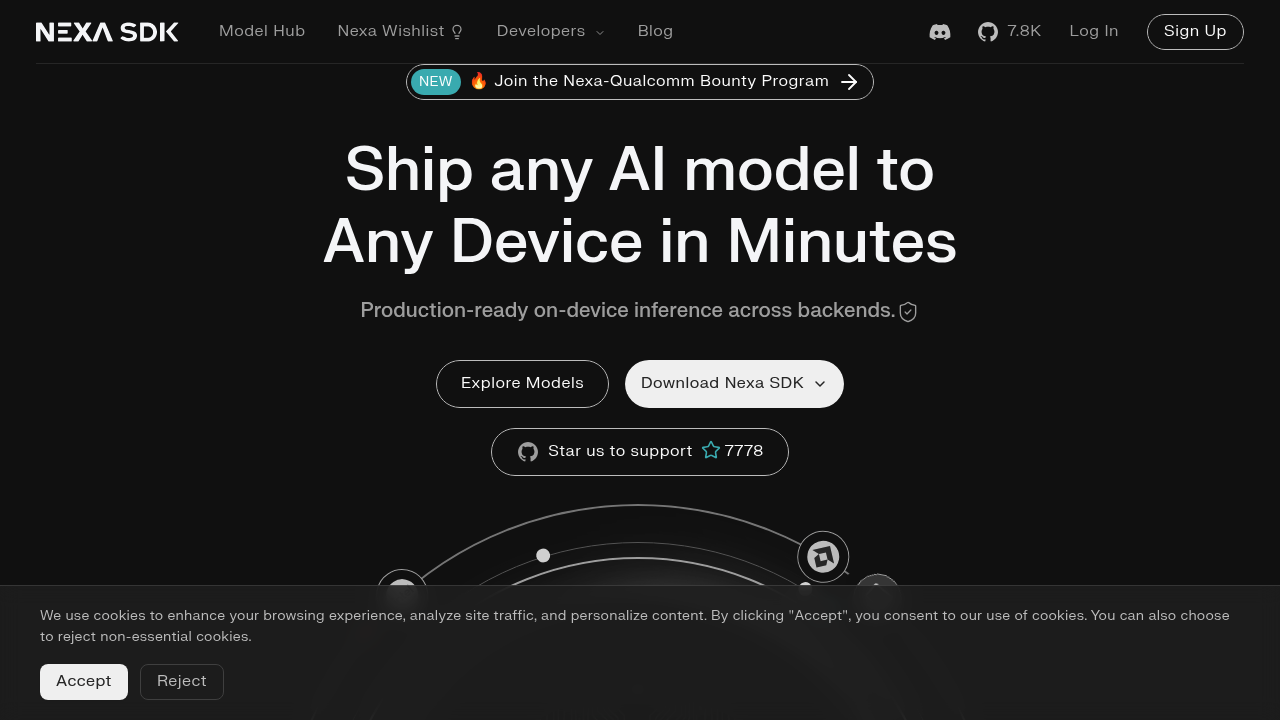

Nexa SDK

The SDK handles on-device AI inference by routing model execution through three distinct hardware backends: NPU, GPU, and CPU. When you initialize a model, the SDK automatically detects the available compute resources and assigns the workload to one of these backends based on hardware capabilities and model requirements. Because of this abstraction layer, the same code runs across different platforms without modification.
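To make the routing idea concrete, here is a minimal sketch of backend selection with fallback. It is not the Nexa SDK API: the names `Backend`, `ModelRequirements`, `PREFERENCE`, and `select_backend` are hypothetical, and the NPU-then-GPU-then-CPU preference order is an assumption made for illustration only.

```python
from dataclasses import dataclass
from enum import Enum


class Backend(Enum):
    NPU = "npu"
    GPU = "gpu"
    CPU = "cpu"


@dataclass(frozen=True)
class ModelRequirements:
    # Hypothetical: the set of backends this model can run on.
    supported_backends: frozenset


# Assumed preference order (not taken from the SDK): most specialized first.
PREFERENCE = (Backend.NPU, Backend.GPU, Backend.CPU)


def select_backend(available, requirements):
    """Return the first preferred backend that the device offers
    and the model supports; raise if there is no overlap."""
    for backend in PREFERENCE:
        if backend in available and backend in requirements.supported_backends:
            return backend
    raise RuntimeError("no compatible backend available")


if __name__ == "__main__":
    # A device with a GPU and CPU, running a model built for all three backends.
    device = {Backend.GPU, Backend.CPU}
    model = ModelRequirements(frozenset(Backend))
    print(select_backend(device, model).value)
```

Under these assumptions, calling code only ever asks for "a backend", so moving to a device with different hardware changes the detected set but not the application code.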

