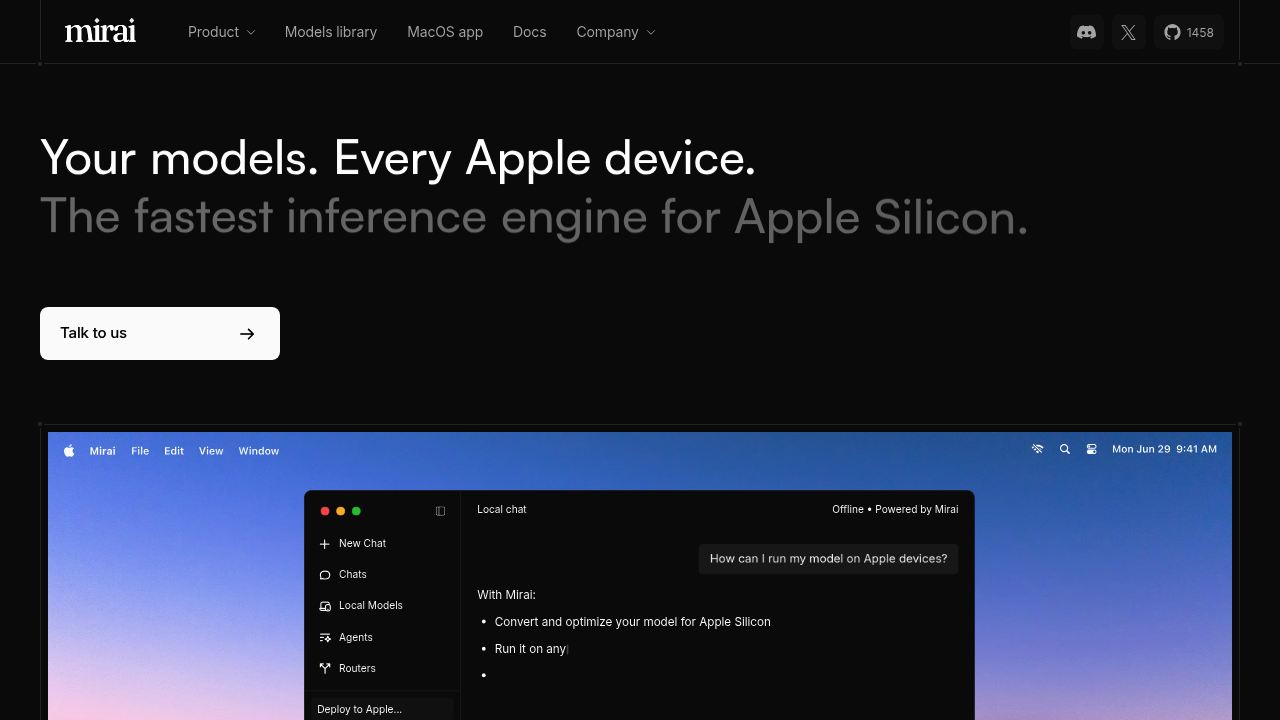

Mirai

Developers building AI applications for Apple devices face a persistent challenge: running sophisticated models locally without sacrificing speed or draining device resources. Mirai tackles this with an inference engine built specifically for Apple Silicon, converting and optimizing models from Hugging Face with a single line of code. The engine taps directly into the Neural Engine available across Mac, iPhone, and iPad hardware and uses unified memory bandwidth that reaches 273 GB/s on M4 Pro chips.
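To give a rough sense of why the quoted memory bandwidth matters for local inference, here is a back-of-the-envelope sketch. It assumes token generation is memory-bound and that every model weight is read once per generated token; this is a common rule of thumb, not a description of how Mirai works internally. The function name and the 8B-parameter, 4-bit model are hypothetical illustrations, and real throughput will be lower than this ceiling.

```python
def max_decode_tokens_per_second(bandwidth_gb_s: float,
                                 params_billion: float,
                                 bits_per_weight: float) -> float:
    """Rough upper bound on decode speed for a memory-bound model.

    Assumes all weights are streamed from memory once per token and
    ignores KV-cache traffic, activations, and compute time.
    """
    # Billions of parameters times bytes per weight gives model size in GB.
    model_size_gb = params_billion * bits_per_weight / 8
    return bandwidth_gb_s / model_size_gb


# Hypothetical example: an 8B-parameter model quantized to 4 bits per weight
# (about 4 GB of weights) at the 273 GB/s bandwidth figure quoted above.
ceiling = max_decode_tokens_per_second(273, 8, 4)
print(f"Theoretical ceiling: about {ceiling:.0f} tokens/s")
```

Under these assumptions, halving the bytes per weight through quantization doubles the ceiling, which is one reason model conversion and optimization matter on bandwidth-limited devices.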

🔗 Similar AI Tools

Discover more tools in this category

Claude

Teams wanting AI that doesn't compromise on privacy find Claude refreshing

ChatGPT

ChatGPT answers almost anything

Gemini

Google's latest AI assistant handles code debugging

DeepSeek

DeepSeek doesn't reveal specific API pricing details upfront

Grok

Grok's output quality hits different than most AI assistants

Dify

Agentic workflows separate Dify from other no-code builders