The data pipeline runs on a credit-based execution system. Each generation request consumes credits from your monthly allocation, and the cost per request varies by model according to its computational requirements. The service doesn't train its own models. It functions as an orchestration layer that manages API calls to third-party model providers.
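
To make the credit mechanics concrete, here is a minimal sketch of a per-request ledger. The model names and credit costs are hypothetical placeholders, since the actual per-model costs aren't listed here, and the class is an illustration rather than the service's real accounting code.

```python
from dataclasses import dataclass

# Hypothetical per-model credit costs; real values are not given in the text.
MODEL_COSTS = {"model-a": 10, "model-b": 25}


class InsufficientCredits(Exception):
    """Raised when a request costs more than the remaining balance."""


@dataclass
class CreditLedger:
    balance: int  # credits left in the current monthly allocation

    def charge(self, model: str) -> int:
        """Deduct the cost of one generation and return the new balance."""
        cost = MODEL_COSTS[model]
        if cost > self.balance:
            raise InsufficientCredits(f"{model} needs {cost}, have {self.balance}")
        self.balance -= cost
        return self.balance


ledger = CreditLedger(balance=1000)
ledger.charge("model-a")  # balance is now 990
ledger.charge("model-b")  # balance is now 965
```

Heavier models drain the allocation faster, which is why the same 1,000 credits can cover very different numbers of generations depending on which models you pick.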

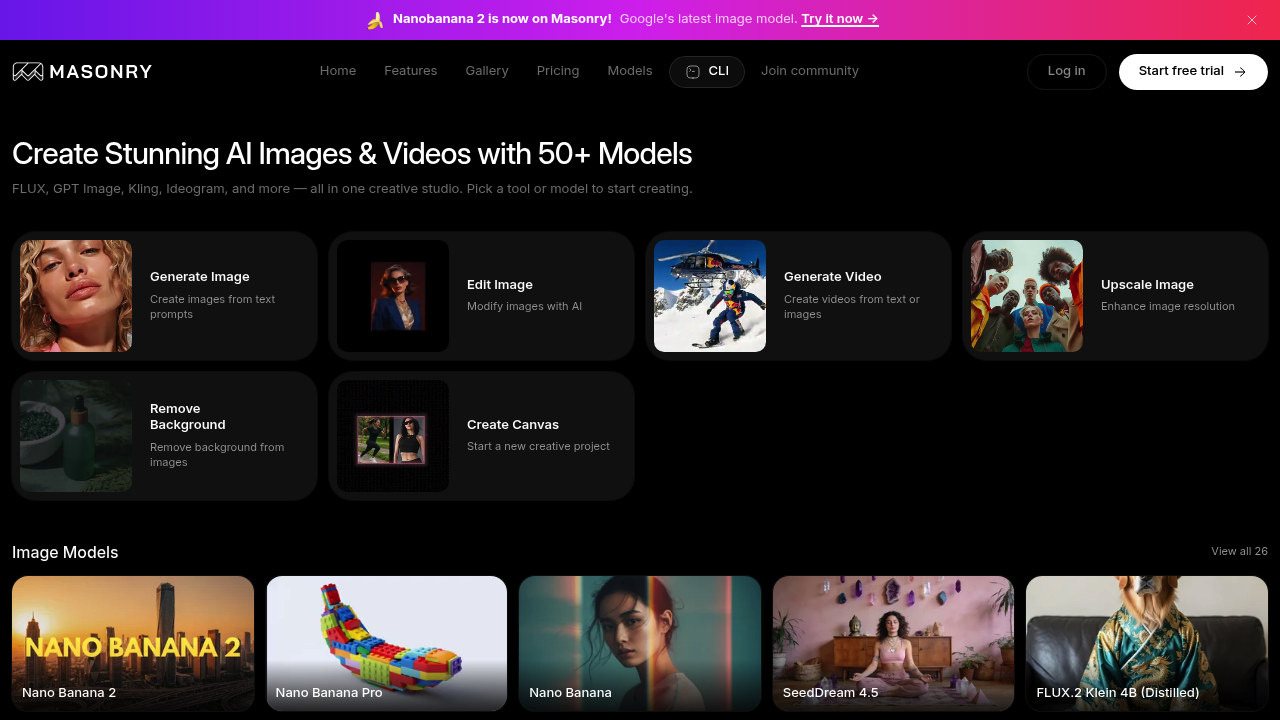

For video generation, the service accepts either text prompts or static images as inputs. The image-to-video transformation pipeline analyzes the input frame and extends it temporally using motion prediction algorithms from the selected video model. Scene-level controls let you specify camera movement, motion intensity, and environmental parameters before the generation runs. Character consistency across multiple frames relies on reference image anchoring: the system passes the same character reference to each subsequent generation request to maintain visual coherence.
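
Reference image anchoring is easy to picture as a request-building step. The sketch below assumes a simple dictionary payload; the field names (`character_reference`, `camera`, `motion_intensity`) and the `video-model` identifier are invented for illustration and are not taken from any documented API.

```python
def build_requests(prompts, character_ref, model="video-model", controls=None):
    """Attach the same character reference to every request in a sequence."""
    controls = controls or {}
    return [
        {
            "model": model,
            "prompt": prompt,
            # The same reference image is reused for every shot.
            "character_reference": character_ref,
            # Copy the scene-level controls so requests don't share one dict.
            **controls,
        }
        for prompt in prompts
    ]


shots = build_requests(
    ["hero enters the room", "hero looks out the window"],
    character_ref="hero.png",
    controls={"camera": "slow pan", "motion_intensity": 0.4},
)
```

Every request in `shots` carries the identical reference, which is the whole trick: the model is conditioned on the same character each time instead of inventing a new one per prompt.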

The upscaling pipeline runs as a separate post-processing step. After initial generation, you can route images through enhancement models that reconstruct them at up to 4K resolution using super-resolution neural networks. Background removal uses segmentation models that classify pixels as foreground or background, then extract the subject with edge refinement.
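
The foreground/background classification step can be sketched in a few lines. This version assumes the segmentation model has already produced a per-pixel foreground probability and applies a hard threshold; the edge refinement mentioned above is omitted, and the function name and threshold are illustrative choices.

```python
def extract_foreground(pixels, fg_prob, threshold=0.5):
    """Return RGBA pixels with the subject opaque and the background transparent.

    pixels:  rows of (r, g, b) tuples
    fg_prob: rows of foreground probabilities in [0, 1], same shape as pixels
    """
    out = []
    for row, probs in zip(pixels, fg_prob):
        out.append([
            # Alpha 255 keeps the pixel, alpha 0 hides it.
            (r, g, b, 255 if p >= threshold else 0)
            for (r, g, b), p in zip(row, probs)
        ])
    return out


image = [[(200, 10, 10), (0, 0, 0)]]
probs = [[0.9, 0.1]]
cutout = extract_foreground(image, probs)
# cutout == [[(200, 10, 10, 255), (0, 0, 0, 0)]]
```

A production pipeline would soften the mask near the subject's outline rather than cutting at a single threshold, which is what the edge refinement step is for.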

The storyboarding feature organizes multiple generations into sequence boards. This doesn't create automatic scene transitions. It's a layout tool for arranging and reviewing multiple outputs in narrative order. Smart remixing takes an existing output and uses it as a conditioning input for new generations, allowing iterative refinement without starting from scratch.

Export functionality renders final outputs in three aspect ratios: 16:9 for horizontal video, 1:1 for square social posts, and 9:16 for vertical mobile formats. The service handles the cropping and reframing internally based on the original generation's composition.
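
For a sense of what reframing involves, the sketch below computes a crop rectangle for each export ratio. It uses a plain center crop, which is a simplification: the service is described as reframing based on composition, and that logic isn't documented here. The function and dictionary names are illustrative.

```python
def center_crop_box(width, height, ratio_w, ratio_h):
    """Return (left, top, right, bottom) of the largest centered crop with the given ratio."""
    # Compare width/height with ratio_w/ratio_h using integers only.
    if width * ratio_h > height * ratio_w:
        # Source is too wide: keep the full height and trim the sides.
        new_w = height * ratio_w // ratio_h
        new_h = height
    else:
        # Source is too tall or already matches: keep the full width.
        new_w = width
        new_h = width * ratio_h // ratio_w
    left = (width - new_w) // 2
    top = (height - new_h) // 2
    return (left, top, left + new_w, top + new_h)


EXPORT_RATIOS = {"horizontal": (16, 9), "square": (1, 1), "vertical": (9, 16)}
boxes = {
    name: center_crop_box(1920, 1080, *ratio)
    for name, ratio in EXPORT_RATIOS.items()
}
# boxes["square"] == (420, 0, 1500, 1080)
```

Starting from a 1920x1080 frame, the vertical export keeps only about 607 of the original 1,920 pixels of width, so a subject that sits off-center can easily be cut out by a naive crop. That is the reason composition-aware reframing matters.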

Model comparison runs generations in parallel rather than sequentially. When you select multiple models, Masonry sends identical prompts to each simultaneously and aggregates results once all API responses return. This lets you evaluate how Kling, Runway, and Pika interpret the same prompt without running separate sessions.
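
The fan-out pattern described here maps naturally onto `asyncio.gather`. In the sketch below, `fake_generate` is a stand-in for a provider API call (it only sleeps briefly and makes no network request), so treat it as an illustration of the concurrency pattern, not of how Masonry is implemented.

```python
import asyncio


async def fake_generate(model: str, prompt: str) -> dict:
    """Stand-in for one provider API call (hypothetical; no network access)."""
    await asyncio.sleep(0.01)  # simulate provider latency
    return {"model": model, "prompt": prompt, "status": "done"}


async def compare(models, prompt):
    """Send the same prompt to every model concurrently and wait for all results."""
    tasks = [fake_generate(m, prompt) for m in models]
    # gather returns results in the order the awaitables were passed in.
    return await asyncio.gather(*tasks)


results = asyncio.run(
    compare(["Kling", "Runway", "Pika"], "a fox running through snow")
)
```

Because the calls overlap, total wait time is roughly that of the slowest model, not the sum of all three.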

The service offers a three-day trial period with full model access. After that, the Starter plan runs $10 monthly for 1,000 credits, while Pro costs $30 monthly for 5,000 credits. Yearly subscriptions get a 20% discount. Neither plan adds watermarks to outputs, and both include priority support and access to all available models.
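
The per-credit cost of each plan follows directly from those numbers. The short calculation below uses the prices and credit counts stated above; the one assumption is that the 20% yearly discount applies to twelve times the monthly price.

```python
# (USD per month, credits per month), taken from the plan prices above.
PLANS = {"Starter": (10, 1000), "Pro": (30, 5000)}
YEARLY_DISCOUNT_PERCENT = 20


def cost_per_credit(price_usd, credits):
    """USD paid per credit on a monthly subscription."""
    return price_usd / credits


def yearly_total(price_usd, discount_percent=YEARLY_DISCOUNT_PERCENT):
    """Yearly price, assuming the discount applies to 12 x the monthly price."""
    return price_usd * 12 * (100 - discount_percent) / 100


for name, (price, credits) in PLANS.items():
    print(name, cost_per_credit(price, credits), yearly_total(price))
# Starter: 0.01 USD per credit, 96.0 USD per year
# Pro:     0.006 USD per credit, 288.0 USD per year
```

Pro works out to 40% less per credit than Starter, so it is the cheaper option for anyone who expects to use more than about 3,000 credits a month.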

Technical limitations center on the credit system. Credits deplete with each generation, and monthly allocations don't roll over. When you run out, you either purchase additional credits or wait for the next billing cycle. The service can't bypass rate limits imposed by upstream model providers, so during high-demand periods some models may queue requests or respond more slowly.
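
If you call the service programmatically, one common way to cope with upstream rate limits is client-side retry with exponential backoff. The sketch below is a generic pattern, not documented behavior: `RateLimited` is a hypothetical exception standing in for whatever error a rate-limited request produces, and the delay values are arbitrary.

```python
import time


class RateLimited(Exception):
    """Hypothetical error standing in for an upstream rate-limit response."""


def with_backoff(call, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Retry `call` with exponentially growing delays when it is rate limited."""
    for attempt in range(max_attempts):
        try:
            return call()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts; surface the error to the caller
            # Delays grow as base_delay, 2 * base_delay, 4 * base_delay, ...
            sleep(base_delay * 2 ** attempt)


attempts = {"count": 0}


def flaky_generation():
    """Fails twice with a rate limit, then succeeds."""
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise RateLimited()
    return "ok"


delays = []
outcome = with_backoff(flaky_generation, sleep=delays.append)
# outcome == "ok" and delays == [0.5, 1.0]
```

Passing `sleep=delays.append` records the waits instead of actually sleeping, which keeps the example fast; in real use you would leave the default `time.sleep`.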

The system provides API access for programmatic generation, letting developers integrate Masonry's model aggregation into their own applications. Team features support collaborative workspaces where multiple users can share boards and generation history. There is no mobile app or browser extension; the service runs entirely through its web interface.