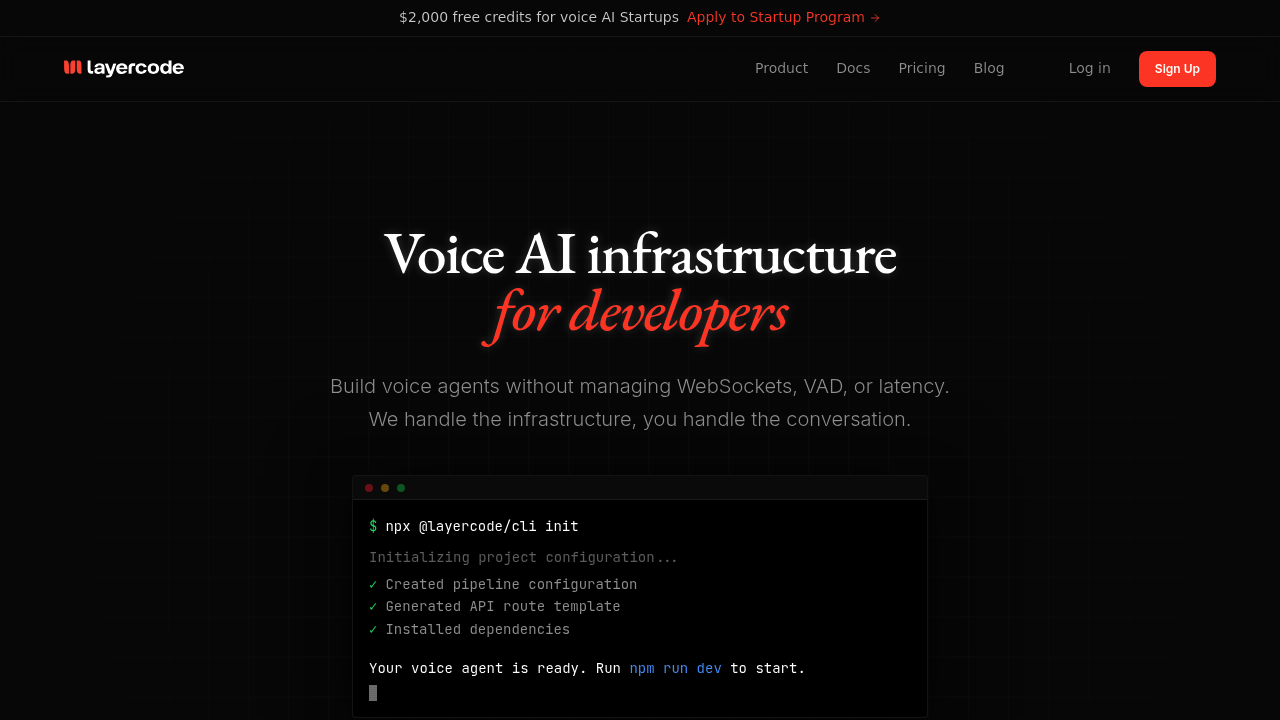

Layercode handles the voice infrastructure layer, and developers control conversation logic through webhooks. It streams audio through the nearest edge location on Cloudflare infrastructure, runs speech-to-text in real time, and synthesizes responses back to users. Developers write the conversation handling code while Layercode manages WebSocket connections, voice activity detection, and model coordination.

The Node.js SDK integrates with Next.js projects in under 50 lines of code. A customer service startup built their voice agent by connecting the SDK to their existing Vercel AI SDK setup, letting them switch between Deepgram and other speech providers without rewriting infrastructure. The webhook architecture means conversation logic lives in familiar TypeScript functions rather than custom streaming protocols.
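As a rough illustration of what "conversation logic in a TypeScript function" can look like, here is a minimal sketch. It does not use the Layercode SDK: the `TranscriptEvent` and `SpokenReply` shapes, the `handleTranscript` function, and the `echoEngine` stand-in are all hypothetical names invented for this example, since the real webhook payload is not described here.

```typescript
// Hypothetical payload shapes. The actual Layercode webhook schema is not
// covered in this article, so these field names are assumptions.
interface TranscriptEvent {
  sessionId: string;
  text: string; // transcribed user speech
}

interface SpokenReply {
  sessionId: string;
  text: string; // text to hand back for speech synthesis
}

// Any existing text-only conversation engine, e.g. a wrapper around an LLM call.
type ConversationEngine = (sessionId: string, userText: string) => Promise<string>;

// Turns one transcribed utterance into one reply, delegating the actual
// conversation logic to the engine that is passed in.
async function handleTranscript(
  event: TranscriptEvent,
  engine: ConversationEngine
): Promise<SpokenReply> {
  const userText = event.text.trim();
  if (userText.length === 0) {
    // Nothing usable was transcribed, so skip the engine call entirely.
    return { sessionId: event.sessionId, text: "Sorry, I didn't catch that." };
  }
  const reply = await engine(event.sessionId, userText);
  return { sessionId: event.sessionId, text: reply };
}

// Stand-in engine for local testing; a real one would call an LLM.
const echoEngine: ConversationEngine = async (_sessionId, userText) =>
  `You said: ${userText}`;
```

Because the engine is passed in as a plain function, the text agent stays unaware of audio, which is the property the webhook architecture is meant to provide.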

Per-second billing charges only for active speech. Silence costs nothing. Platform fees run 4 cents per minute for web and mobile applications. Speech-to-text from Deepgram Nova adds 0.92 cents per minute, and text-to-speech ranges from 0.5 cents per minute for Inworld to 2.4 cents per minute for ElevenLabs. Session recordings capture every interaction for debugging and compliance.
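To make the pricing concrete, the following sketch adds up the per-minute rates quoted above for a given amount of active speech. It assumes that the platform, speech-to-text, and text-to-speech rates are all metered on the same active-speech seconds, which the pricing description does not spell out, and it leaves out any LLM costs. The function and constant names are illustrative.

```typescript
// Rates in US cents per minute, taken from the figures quoted above.
const PLATFORM_CENTS_PER_MIN = 4;
const STT_DEEPGRAM_NOVA_CENTS_PER_MIN = 0.92;
const TTS_CENTS_PER_MIN = { inworld: 0.5, elevenlabs: 2.4 } as const;

type TtsProvider = keyof typeof TTS_CENTS_PER_MIN;

// Estimates the voice cost of a session in cents.
// Assumption: every rate applies to the same active-speech duration.
function estimateCostCents(activeSpeechSeconds: number, tts: TtsProvider): number {
  if (activeSpeechSeconds < 0) {
    throw new RangeError("activeSpeechSeconds must be non-negative");
  }
  const centsPerMinute =
    PLATFORM_CENTS_PER_MIN + STT_DEEPGRAM_NOVA_CENTS_PER_MIN + TTS_CENTS_PER_MIN[tts];
  // Per-second billing: convert seconds to fractional minutes, no rounding up.
  return (activeSpeechSeconds / 60) * centsPerMinute;
}
```

Under these assumptions, one minute of active speech comes to roughly 5.42 cents with Inworld and 7.32 cents with ElevenLabs.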

An e-learning company building pronunciation tutoring agents hot-swapped voice models during development without infrastructure changes. They tested Cartesia Sonic Turbo against Rime Mist while keeping the same conversation code. The observability tooling showed exactly where latency spikes occurred during peak usage hours.

Layercode falls short for teams that need more than 10 concurrent voice sessions without upgrading to a paid plan. The Developer plan caps at 10 sessions, with seven-day data retention and community support only. A call center prototype handling 30 simultaneous calls hits this wall immediately.

Custom voice cloning demands the Pro plan at 250 dollars monthly, which provides 50 concurrent sessions, 30-day retention, priority support, webhook signatures, and team collaboration. A meditation app cloning their instructor's voice needs this tier just for the cloning feature. Enterprise plans add unlimited sessions, HIPAA compliance, SLA guarantees, and on-premise deployment for healthcare and financial services with strict data requirements.
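The plan limits above can be summarized as a small decision function. This is only a sketch of the tiers as described in this article, not an official pricing calculator. The `Requirements` type and `minimumPlan` function are hypothetical, and routing retention needs beyond 30 days to Enterprise is an assumption, since Enterprise retention is not stated.

```typescript
type Plan = "Developer" | "Pro" | "Enterprise";

// Hypothetical description of what a team needs from the platform.
interface Requirements {
  concurrentSessions: number;
  retentionDays: number;
  voiceCloning: boolean;
  hipaa: boolean;
  onPremise: boolean;
}

// Returns the lowest tier that covers the requirements, using the limits
// quoted above: Developer (10 sessions, 7-day retention) and
// Pro (50 sessions, 30-day retention, voice cloning).
function minimumPlan(req: Requirements): Plan {
  const needsEnterprise =
    req.hipaa ||
    req.onPremise ||
    req.concurrentSessions > 50 ||
    req.retentionDays > 30; // assumption: anything beyond Pro limits means Enterprise
  if (needsEnterprise) return "Enterprise";

  const needsPro =
    req.voiceCloning || req.concurrentSessions > 10 || req.retentionDays > 7;
  return needsPro ? "Pro" : "Developer";
}
```

For example, the 30-call prototype and the meditation app with a cloned voice both land on Pro, while any HIPAA requirement pushes a team to Enterprise regardless of session count.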

The startup program offers 2,000 dollars in free credits specifically for voice AI companies. Standard Developer accounts get 100 dollars to start. A voice assistant startup testing market fit burns through free credits during user research before committing to paid infrastructure.

Developers wanting visual conversation builders won't find them here. This isn't a no-code platform. It requires writing webhook handlers and understanding audio streaming concepts. A marketing team wanting to launch voice agents without engineering support needs different tools entirely.

This service works best for TypeScript developers building production voice applications who want the infrastructure handled for them while keeping control of the conversation logic. Integration with LangChain, OpenAI, Anthropic, and Ollama means existing AI chains connect directly. A real estate chatbot team added voice to their text agent by routing audio through Layercode while keeping their conversation engine unchanged.

Skip this if you're building simple voice demos, need a visual workflow editor, or want an all-in-one voice platform. The webhook model demands comfort with async processing and API integration patterns.