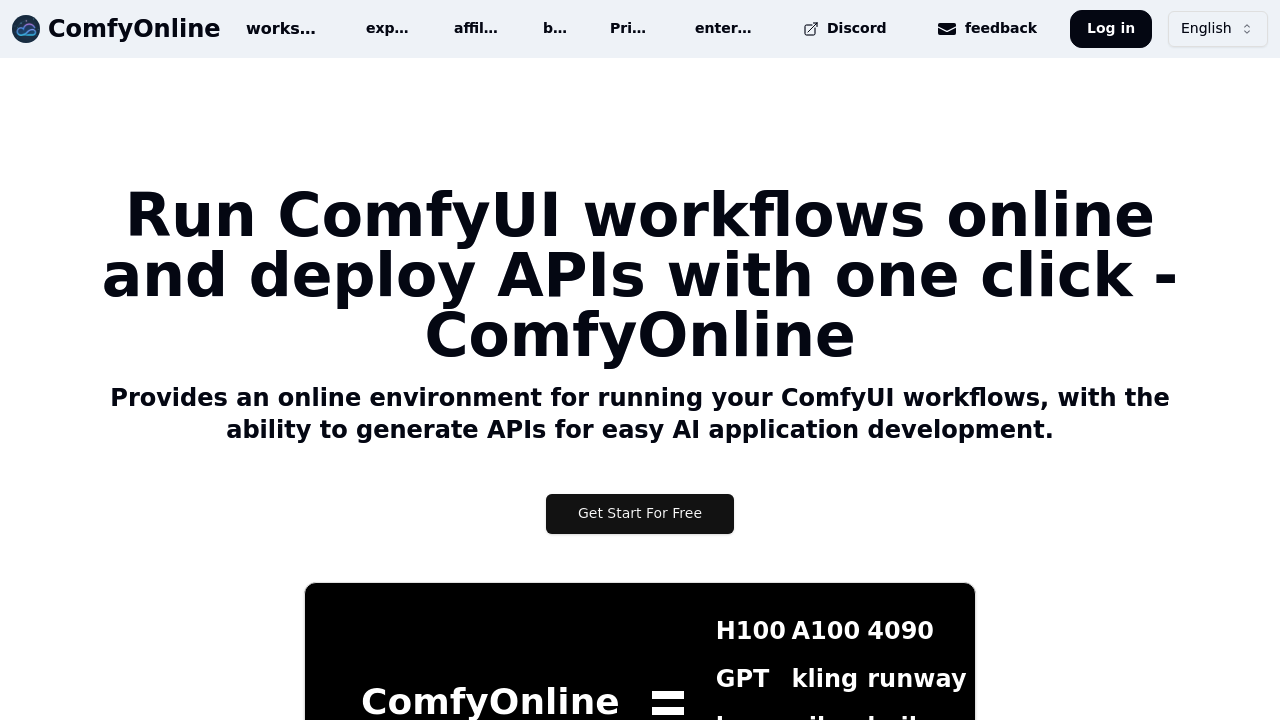

You build your workflow in the familiar ComfyUI interface. Connect nodes, configure parameters, run generations. The difference is that everything happens online. When you're done editing and want to use the workflow in an app, you deploy it as an API with a click, and the service generates the endpoint automatically.

The API becomes callable from any application. You send a request, the workflow runs on whatever GPU type you selected, and the results come back. Choose from H100, A100, or RTX 4090 hardware depending on speed requirements. When traffic increases, the system scales without manual intervention.
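To make that request-and-response flow concrete, here is a minimal Python sketch of a client call. The endpoint URL, the bearer-token header, and the `{"inputs": ...}` payload shape are all assumptions for illustration. The real URL, authentication scheme, and schema come from whatever the service shows you after you deploy.

```python
import json
import urllib.request

# Hypothetical values: replace with the endpoint and key the service gives you.
ENDPOINT_URL = "https://api.example.com/v1/workflows/my-workflow/run"
API_KEY = "YOUR_API_KEY"


def build_run_request(
    inputs: dict, url: str = ENDPOINT_URL, api_key: str = API_KEY
) -> urllib.request.Request:
    """Build (but do not send) a POST request carrying workflow inputs as JSON."""
    body = json.dumps({"inputs": inputs}).encode("utf-8")  # payload shape is assumed
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",  # auth scheme is assumed
        },
    )


def run_workflow(inputs: dict, timeout: float = 300.0) -> dict:
    """Send the request and decode a JSON response (response shape is assumed)."""
    request = build_run_request(inputs)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)
```

In an app you would call something like `run_workflow({"prompt": "a red fox"})`. Splitting request construction from sending keeps the part you control easy to test without touching the network.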

You're charged only when workflows actively run. Not while editing. Not while idle. This matters because traditional GPU rentals bill constantly, even when you're just tweaking nodes or testing configurations. Here you pay for execution time alone.
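A quick back-of-the-envelope comparison shows why this matters. The rates below are made-up placeholders, not the service's pricing or anyone else's; plug in real numbers before drawing conclusions.

```python
def always_on_cost(hourly_rate: float, hours_rented: float) -> float:
    """Cost of a rented GPU that bills for every hour it is up, busy or idle."""
    return hourly_rate * hours_rented


def pay_per_run_cost(per_second_rate: float, runs: int, seconds_per_run: float) -> float:
    """Cost when only active execution time is billed."""
    return per_second_rate * (runs * seconds_per_run)


# Illustrative numbers only, not real pricing.
rental = always_on_cost(hourly_rate=2.00, hours_rented=8)  # one working day
serverless = pay_per_run_cost(per_second_rate=0.001, runs=200, seconds_per_run=30)

print(f"always-on rental: ${rental:.2f}")
print(f"pay-per-run:      ${serverless:.2f}")
```

With these placeholder figures the rental comes to $16.00 and the pay-per-run total to $6.00, even though the assumed per-second rate works out to a higher hourly price. The comparison flips once the GPU is busy for most of the rented time, so the advantage depends on how much of your day is actual execution.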

The platform connects to third-party services through pre-installed nodes: video generation through Kling, Hailuo, Runway, Luma, Pika, Wan720, and Veo; image creation via Recraft, Ideogram, and Flux Pro Ultra; and audio synthesis through ElevenLabs. Multiple large language model integrations come pre-configured. Custom nodes and extensions are also pre-installed, eliminating the usual hunt for compatible versions.

Where things get tricky is understanding which models are included with the service and which require external API keys. The interface doesn't always make this clear upfront. You might start building a workflow assuming everything's included, then discover certain integrations need separate accounts. The documentation on this point could be clearer.

The serverless architecture means you never start or stop GPUs yourself. No watching credits burn while machines idle. No racing to shut down instances. The system handles resource allocation automatically, which removes operational headaches but also removes control over exactly when resources spin up.

For developers building AI products, this shortens the path from ComfyUI prototype to production API. You test workflows locally, move them online, generate an endpoint, integrate it into your app. The entire deployment pipeline compresses to minutes instead of days spent configuring servers and managing infrastructure.

The automatic scaling handles traffic surges without configuration. Your API won't fall over when usage spikes, but you also can't cap scaling to control costs. That automatic behavior cuts both ways depending on your tolerance for variable expenses.
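If variable expenses worry you, one partial workaround is to cap spend in your own client before a request ever reaches the API. The sketch below is an assumption-heavy illustration, not a feature of the service: the `BudgetGuard` class is hypothetical, the per-run cost is your own estimate, and it only limits calls that pass through this guard.

```python
class BudgetGuard:
    """Tracks estimated spend and refuses new runs once the budget is used up.

    Amounts are integer cents to avoid floating-point drift.
    """

    def __init__(self, budget_cents: int, estimated_cents_per_run: int) -> None:
        if budget_cents < 0 or estimated_cents_per_run <= 0:
            raise ValueError("budget must be >= 0 and per-run estimate must be > 0")
        self.budget_cents = budget_cents
        self.estimated_cents_per_run = estimated_cents_per_run
        self.spent_cents = 0

    def try_reserve(self) -> bool:
        """Reserve budget for one run; return False if it would exceed the cap."""
        if self.spent_cents + self.estimated_cents_per_run > self.budget_cents:
            return False
        self.spent_cents += self.estimated_cents_per_run
        return True


# Example: a $1.00 budget with runs estimated at 30 cents each.
guard = BudgetGuard(budget_cents=100, estimated_cents_per_run=30)
decisions = [guard.try_reserve() for _ in range(5)]
```

Here the first three runs are allowed and the remaining two are refused, leaving 90 cents of estimated spend. Your app would call `try_reserve()` before each API request and skip or queue the request when it returns `False`. A single in-memory counter like this does not coordinate across multiple processes or servers.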