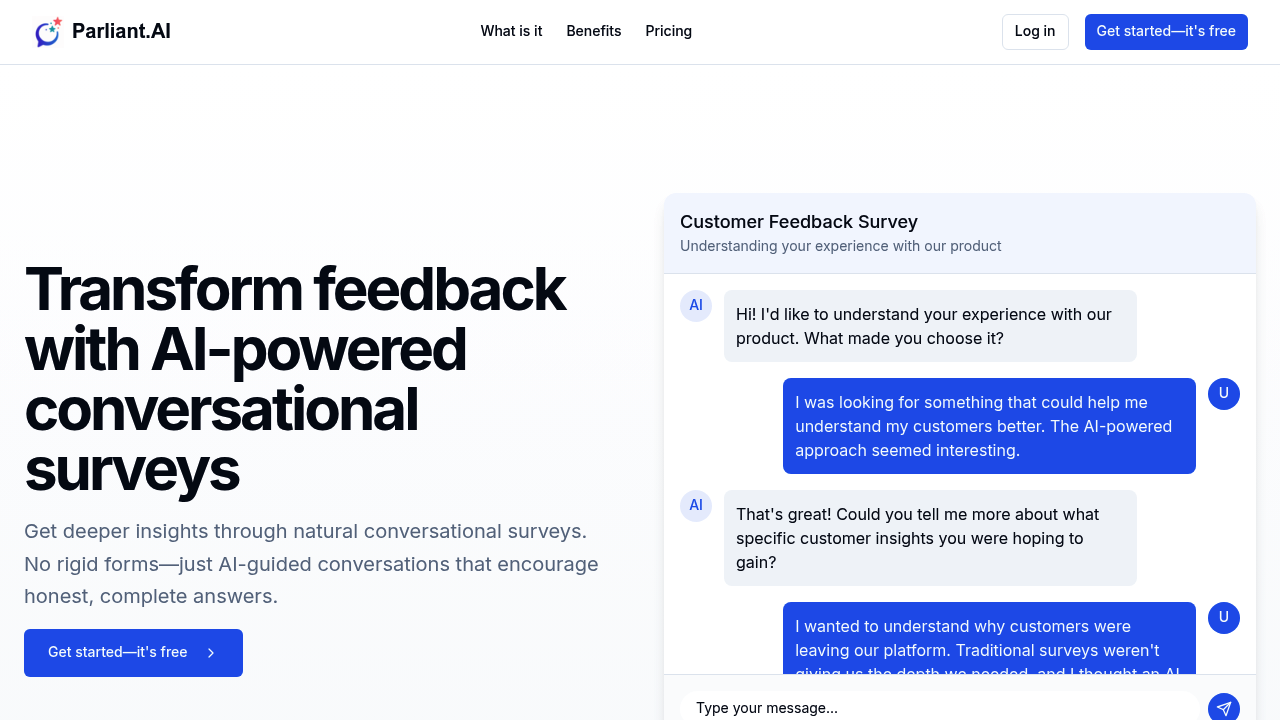

Parliant.AI replaces rigid survey forms with AI-powered conversations that adapt in real time. Instead of asking predetermined questions in a fixed order, it responds to what people actually say. A user mentions they're confused by navigation, and the AI immediately asks which specific parts cause problems. Another user says they love the new dashboard, and the AI digs into what makes it work for them. The conversations feel natural because the AI writes its follow-up questions based on each response.

You describe what insights you need in plain language, and the AI builds the entire conversation flow. No scripting required. Respondents can type their answers or speak them aloud, whichever feels easier. Parliant.AI automatically categorizes responses and identifies recurring themes across hundreds of conversations. Instead of reading through individual answers manually, you get extracted insights showing patterns in what customers actually care about.

The system evaluates how insightful each response is and can prompt users to elaborate when answers are too vague. Someone writes "it's fine" and the AI recognizes that's not useful feedback, asking them to explain what specifically works or doesn't work. This pushes past surface-level reactions into actual reasoning.

A UX researcher testing a new checkout flow gets detailed explanations of where users hesitate and why. An HR director gathering employee feedback about remote work policies hears concerns that wouldn't surface in multiple-choice questions. A nonprofit director researching donor motivations discovers reasons for giving that weren't on her radar.

Parliant.AI works best for qualitative research where understanding the "why" matters more than counting responses. It doesn't replace quantitative surveys that track metrics over time. With only 100 responses per month on the free plan, samples are too small to support reliable statistical conclusions. If you need thousands of responses for market research or demographic analysis, you'll hit limits quickly.
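To put a rough number on that sample-size limit, here is a minimal sketch of the standard margin-of-error calculation for a proportion. It is not part of Parliant.AI; the function name `margin_of_error` is made up for illustration, and it assumes a simple random sample, a 95% confidence level (z = 1.96), and the worst-case 50/50 split.

```python
import math


def margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Half-width of the normal-approximation confidence interval for a proportion.

    n: number of responses
    p: assumed true proportion (0.5 is the worst case)
    z: z-score for the confidence level (1.96 is roughly 95%)
    """
    return z * math.sqrt(p * (1 - p) / n)


# Compare the free plan's monthly cap with the Pro plan's monthly cap.
for n in (100, 1000):
    print(f"{n} responses: +/- {margin_of_error(n) * 100:.1f} percentage points")
```

Under those assumptions, 100 responses gives about plus or minus 9.8 percentage points, and 1,000 gives about plus or minus 3.1. Adaptive conversations are also not identical questions asked identically, so treat any percentage drawn from them as directional at best.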

The free plan caps at 3 surveys and 100 responses per month, enough to test the approach but not to run ongoing feedback programs. Pro, at $49 per month, includes unlimited surveys and 1,000 responses, suitable for small teams doing regular customer research. Custom branding is available only on the Enterprise plan, so free and Pro surveys go out with Parliant.AI branding visible.

No integrations are listed, so you can't automatically push survey results into your CRM or analytics tools. You're working within Parliant.AI itself.

Skip this if you need simple yes/no data collection or want to survey large audiences cheaply. It's also the wrong choice if you're collecting structured data for reports where you need identical questions asked identically every time. Traditional tools handle that better.

It works when you're stuck interpreting why customers behave in certain ways and multiple-choice questions keep missing the real answer. When you're reading survey results thinking "but I still don't understand what they mean." When you'd interview people one-on-one if you had the time but don't. It automates that conversational depth.