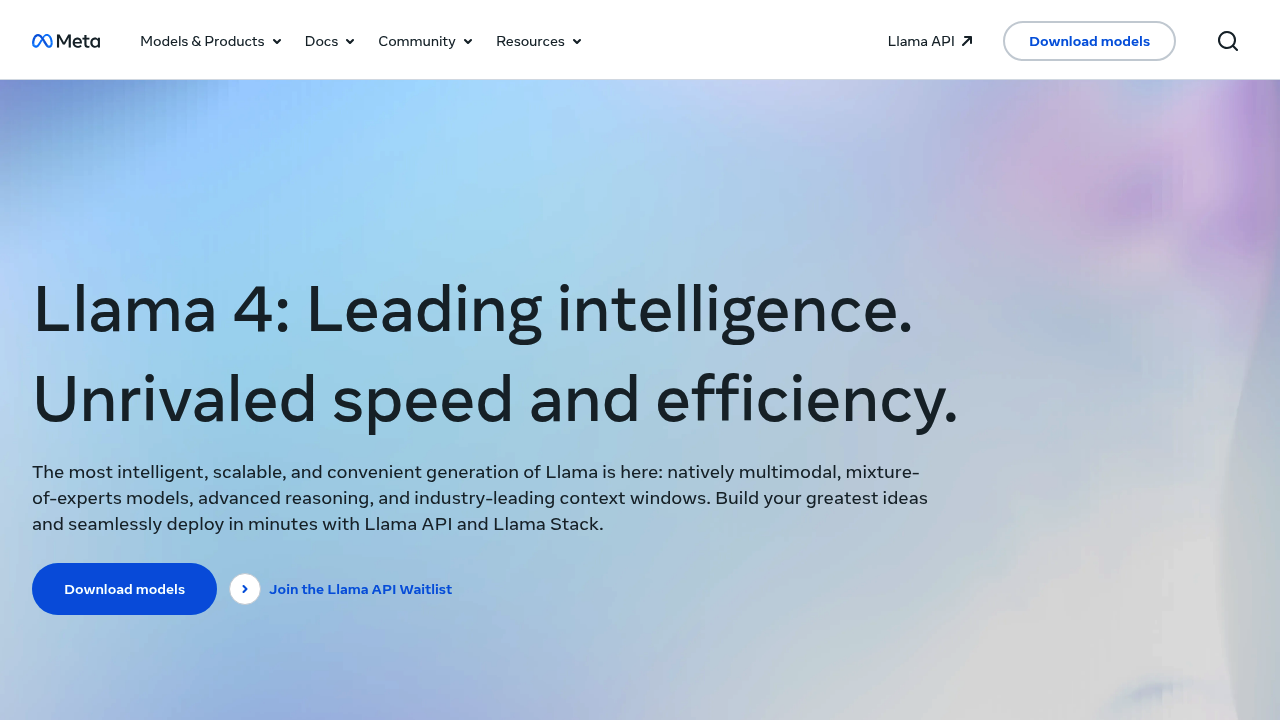

Llama

Open AI foundation model

Meta's Llama fuses text and vision data during pre-training rather than bolting the two modalities together afterward. This early-fusion approach produces natively multimodal models instead of the typical patchwork of separately trained components.

🔗 Similar AI Tools

Discover more tools in this category

Claude

Jan 5

Teams wanting AI that doesn't compromise on privacy find Claude refreshing

ChatGPT

Jan 5

ChatGPT answers almost anything

Gemini

Jan 5

Google's latest AI assistant handles code debugging

DeepSeek

Jan 11

DeepSeek doesn't reveal specific API pricing details upfront

Nectar AI

Jan 16

Daily generation limits and monthly messaging caps restrict how much you can interact with your virtual companions, even on paid plans

Grok

Jan 11

Grok's output quality stands apart from most AI assistants