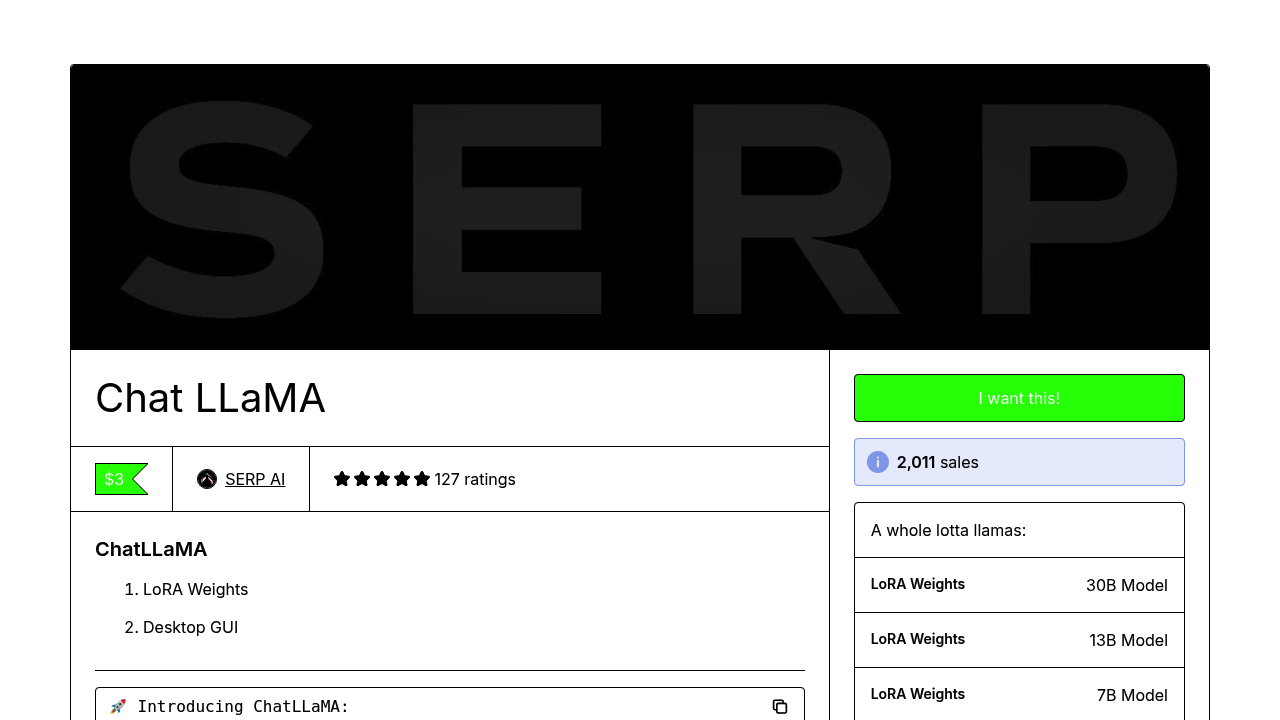

Run your own personal AI assistant on your own hardware, no cloud APIs required. ChatLLaMA gives you LoRA weights trained on Anthropic's HH dataset, so you can build conversational AI that works on consumer GPUs.

Three model sizes cover a range of hardware. The 7B model runs on modest setups; if you have more power, grab the 13B or 30B version. Each size comes in a standard variant and an extended variant with a 2048-token sequence length. A desktop GUI handles local deployment, so there's no wrestling with command-line tools.

Here's the catch: these aren't foundation model weights. You're getting LoRA adapters that sit on top of existing LLaMA base models, and you'll need to source those base LLaMA weights separately. Training focused on modeling the natural back-and-forth between users and AI assistants. Over 2,000 people have already downloaded these weights (4.8 rating across 127 reviews).

This suits researchers and developers building custom chatbot experiences. Prototyping a domain-specific assistant for legal research? Want to experiment with conversational AI without monthly API bills eating your budget? The open-source approach gives you control over your data and deployment.

The team built this primarily for research purposes and is actively seeking developers to contribute code in exchange for GPU resources. An RLHF version has been announced but hasn't materialized yet. For anyone who wants a local AI assistant without vendor lock-in, it's a solid starting point.
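Because the extended variants cap input at 2048 tokens, a long chat eventually has to be trimmed to fit. One common strategy, sketched below as an illustration rather than anything the ChatLLaMA GUI is known to do, keeps the newest turns that fit and drops older ones. The 256-token reply reserve and the word-count stand-in for a real tokenizer are hypothetical choices.

```python
# Sketch: keep a conversation inside a fixed context window.
# ASSUMPTIONS: max_tokens=2048 mirrors the extended variants; the default
# word-count "tokenizer" is a crude stand-in for the base model's real tokenizer.

from typing import Callable, List


def word_count(text: str) -> int:
    """Crude token proxy; a real tokenizer often returns a higher count."""
    return len(text.split())


def trim_history(
    turns: List[str],
    max_tokens: int = 2048,
    reserve_for_reply: int = 256,
    count_tokens: Callable[[str], int] = word_count,
) -> List[str]:
    """Return the most recent turns whose combined cost fits the budget."""
    budget = max_tokens - reserve_for_reply  # leave room for the model's answer
    kept: List[str] = []
    used = 0
    for turn in reversed(turns):  # walk from newest to oldest
        cost = count_tokens(turn)
        if used + cost > budget:
            break  # older turns are dropped once the budget is exhausted
        kept.append(turn)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


if __name__ == "__main__":
    history = ["a b c", "d e", "f"]
    print(trim_history(history, max_tokens=5, reserve_for_reply=2))
```

With a budget of 3 "tokens", the two newest turns fit and the oldest is dropped. Passing a real tokenizer's counting function as `count_tokens` would make the budget accurate.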

ChatLLaMA

Run your own personal AI assistant on your hardware—no cloud APIs required

Frequently asked

Do I need to pay for ChatLLaMA?

Nope, ChatLLaMA is completely free. You're downloading LoRA weights that run on your own hardware, so there aren't any subscription fees or API costs to worry about.

What GPU do I need to run ChatLLaMA?

The 7B model works on modest consumer GPUs, which is ideal if you're not running a high-end setup. If you have more powerful hardware, the 13B and 30B models generally give better response quality, at the cost of more GPU memory.
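As a rough rule of thumb (an assumption, not a figure from ChatLLaMA's documentation), the memory needed just to hold the weights is the parameter count times the bytes per parameter. The sketch below applies that arithmetic to the three sizes. fp16, int8, and int4 are common ways of loading LLaMA-family models, but whether the ChatLLaMA GUI supports each one isn't stated here. Activations, the KV cache, and the LoRA adapter itself add to these numbers.

```python
# Back-of-the-envelope GPU memory estimate for holding model weights only.
# ASSUMPTION: memory ~= parameter count x bytes per parameter. Activations,
# the KV cache, the LoRA adapter, and framework overhead are NOT included.

# Bytes per parameter for common precisions (not confirmed for ChatLLaMA's GUI).
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billion: float, precision: str) -> float:
    """Billions of params x bytes/param = gigabytes (1 GB = 1e9 bytes)."""
    return params_billion * BYTES_PER_PARAM[precision]


if __name__ == "__main__":
    for size in (7, 13, 30):  # nominal sizes from the listing
        estimates = ", ".join(
            f"{p}: {weight_memory_gb(size, p):.1f} GB" for p in BYTES_PER_PARAM
        )
        print(f"{size}B -> {estimates}")
```

By this estimate the 7B model needs roughly 14 GB in fp16 for its weights alone, which is why 8-bit or 4-bit loading is often used on consumer cards.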

Does ChatLLaMA include the full LLaMA model?

No. It only includes LoRA adapter weights, so you'll need to obtain the base LLaMA model weights separately. Think of it as an upgrade kit rather than the whole car.
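The "upgrade kit" analogy follows from how LoRA works in general: an adapter stores two small matrices, B and A, whose product is added to a frozen base weight W, giving W + (alpha / r) · BA. Without W there is nothing to add the update to. The toy sketch below shows that standard merge in plain Python; the matrices, rank, and `alpha` value are hypothetical, and the rank and scaling ChatLLaMA actually used aren't stated here.

```python
# Minimal illustration of how a LoRA adapter modifies a frozen base weight.
# Standard LoRA formulation: W_merged = W_base + (alpha / r) * (B @ A)
# where W_base is d_out x d_in, B is d_out x r, and A is r x d_in.
# Toy 2x2 matrices with rank r = 1 (hypothetical values for illustration).

from typing import List

Matrix = List[List[float]]


def matmul(x: Matrix, y: Matrix) -> Matrix:
    """Plain-Python matrix product x @ y."""
    return [
        [sum(x[i][k] * y[k][j] for k in range(len(y))) for j in range(len(y[0]))]
        for i in range(len(x))
    ]


def merge_lora(w_base: Matrix, b: Matrix, a: Matrix, alpha: float) -> Matrix:
    """Return W_base + (alpha / r) * (B @ A); r is the rank (rows of A)."""
    r = len(a)
    delta = matmul(b, a)
    scale = alpha / r
    return [
        [w_base[i][j] + scale * delta[i][j] for j in range(len(w_base[0]))]
        for i in range(len(w_base))
    ]


if __name__ == "__main__":
    w_base = [[1.0, 0.0], [0.0, 1.0]]  # frozen base weight (would come from LLaMA)
    b = [[0.5], [0.0]]                 # 2 x 1 adapter matrix
    a = [[1.0, 2.0]]                   # 1 x 2 adapter matrix
    print(merge_lora(w_base, b, a, alpha=2.0))
```

This is also why adapters are small to download: for a 4096 x 4096 projection (the hidden size of LLaMA-7B), a rank-8 adapter, chosen here purely for illustration, stores 8 × (4096 + 4096) = 65,536 values versus about 16.8 million in the full matrix.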

Can I use ChatLLaMA without coding experience?

There's a desktop GUI included that handles local deployment, so you won't need to mess with command-line tools. That said, it's really built for developers and researchers who want to customize and experiment.

What can I actually build with ChatLLaMA?

It's great for prototyping custom chatbots and domain-specific assistants, such as a legal research helper or a customer support bot. Since it runs locally, you control your data and don't rack up monthly API bills.
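Anthropic's HH dataset stores conversations as alternating "Human:" and "Assistant:" turns, so a chatbot built on these adapters plausibly works best when its prompt follows the same layout. That is an assumption; check the ChatLLaMA documentation for the exact template it expects. The sketch below, with a hypothetical `build_prompt` helper, flattens a multi-turn chat into one prompt string and leaves the final Assistant turn open for the model to complete.

```python
# Sketch: turning a multi-turn chat into a single prompt string.
# ASSUMPTION: the adapter responds best to the "Human:/Assistant:" turn
# layout used in Anthropic's HH dataset; the exact template is unconfirmed.

from typing import List, Tuple


def build_prompt(
    history: List[Tuple[str, str]], user_message: str, system_hint: str = ""
) -> str:
    """history holds (user, assistant) pairs from earlier turns."""
    parts: List[str] = []
    if system_hint:
        parts.append(system_hint)  # optional domain framing, e.g. legal research
    for user, assistant in history:
        parts.append(f"\n\nHuman: {user}\n\nAssistant: {assistant}")
    # End with an open Assistant turn so the model writes the next reply.
    parts.append(f"\n\nHuman: {user_message}\n\nAssistant:")
    return "".join(parts)


if __name__ == "__main__":
    prompt = build_prompt(
        [("What is a tort?", "A civil wrong that causes harm to someone.")],
        "Give one example.",
        system_hint="You are a legal research assistant.",
    )
    print(prompt)
```

Keeping the domain framing in `system_hint` makes it easy to reuse the same helper for different assistants, such as the customer support bot mentioned above.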

Is ChatLLaMA ready for production use?

Not really. It was built for research purposes, and the RLHF version isn't available yet. It's better suited to experimentation and learning than to deploying customer-facing applications right now.
