Triall

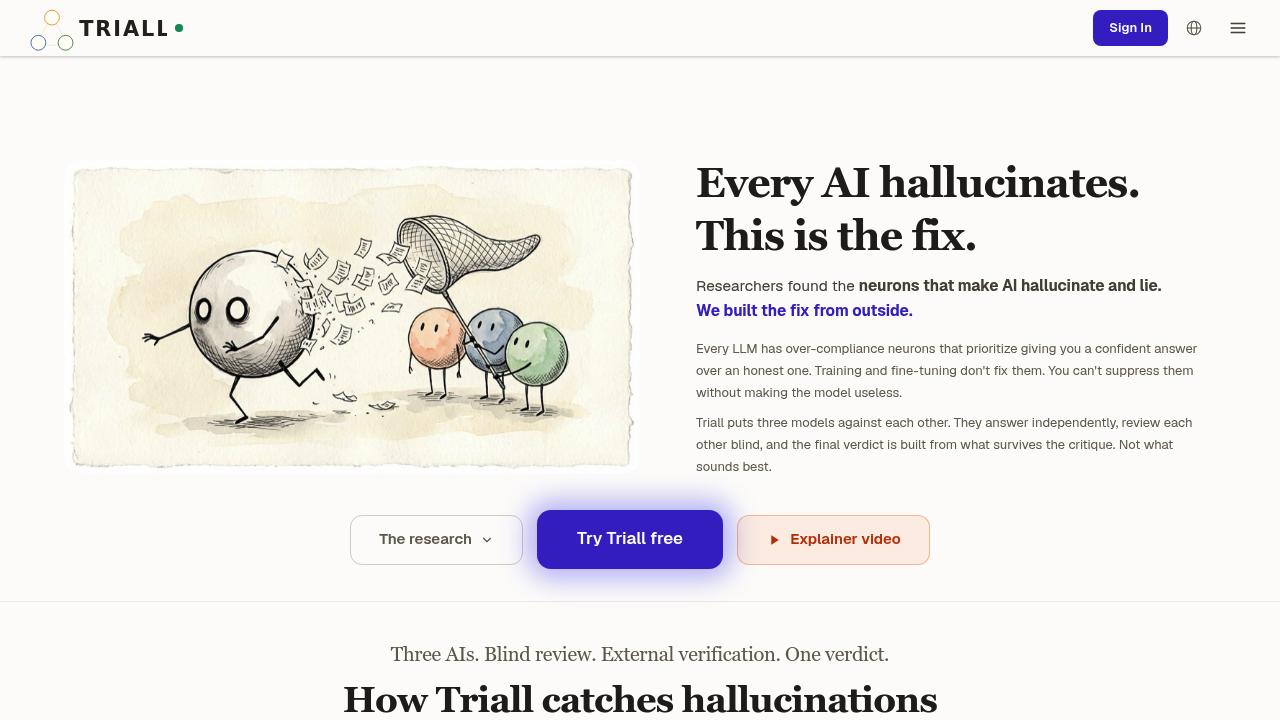

Tired of confidently wrong AI answers ruining your work? Triall does something different.

🔗 Similar AI Tools

Discover more tools in this category

ZeroGPT

Feb 3

ZeroGPT processes text quickly to detect AI-generated content

Originality.AI

Feb 3

Developers get API access for building AI detection into their own apps

ZeroGPT Plus

Feb 3

ZeroGPT Plus can detect if text came from AI with high accuracy

Human Tone

Jan 24

AI-generated content often sounds like a robot wrote it

The Profanity API

Feb 8

The Profanity API handles real-time content moderation at scale

Sinaptic.AI

Jan 17

AI tools don't know when you're about to paste your social security number into them
