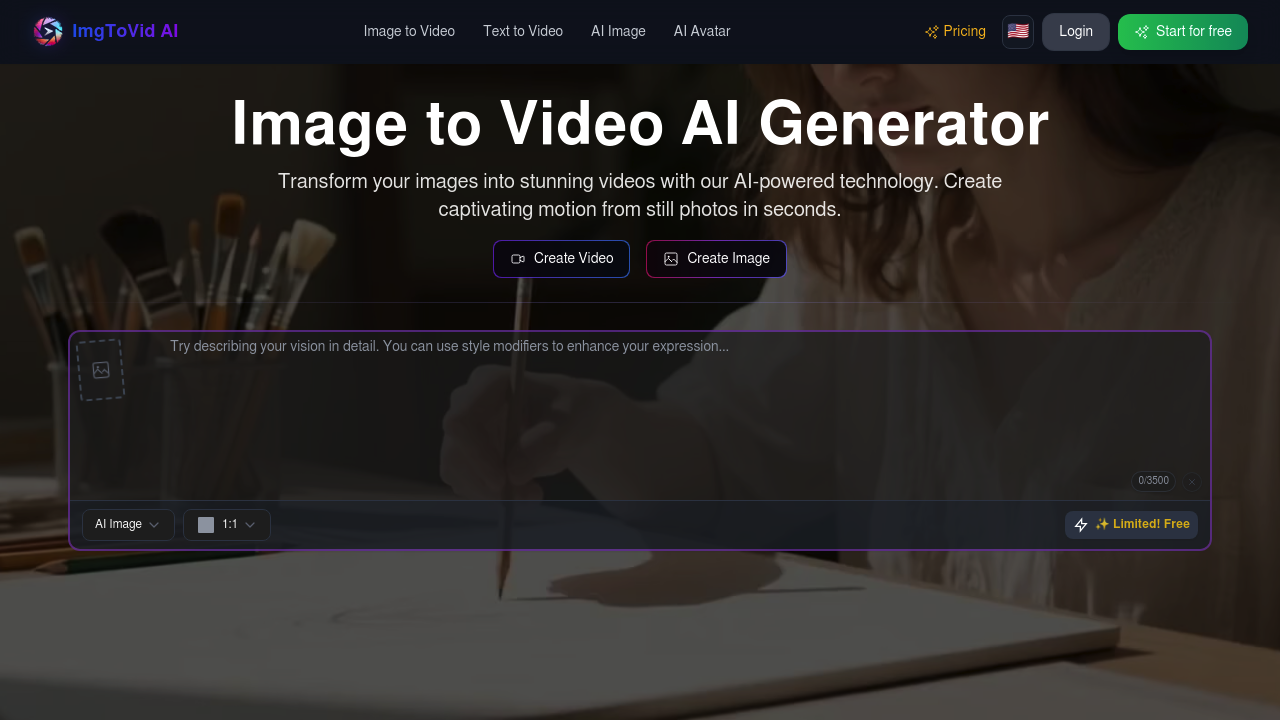

ImgToVid AI

ImgToVid AI processes static images through neural networks that analyze spatial features and generate interpolated frames to create motion sequences. Users upload JPG, PNG, or WEBP files, then provide text prompts that guide how the algorithm interprets and animates the content. The service supports multiple input methods, including direct image upload for animation and text-to-video generation, which creates moving content from a description alone.

Pricing Plans

- Limited features

- 0/3500 limit

Plan with 36000 credits per year

- Up to 360 videos or 7200 images

- 36000 credits per year

- Prompt Generator free

- Access all AI models

- Unlimited Use Of Video

- Video History

- Commercial License

- Credits roll over and never expire

Plan with 66000 credits per year

- Up to 660 videos or 13596 images

- 66000 credits per year

- Prompt Generator free

- Access all AI models

- Unlimited Use Of Video

- Video History

- Commercial License

- No ads

- Credits roll over and never expire

Plan with 156000 credits per year

- Up to 1560 videos or 31992 images

- 156000 credits per year

- Prompt Generator free

- Access all AI models

- Unlimited Use Of Video

- Video History

- Commercial License

- No ads

- Credits roll over and never expire
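
The plan figures above imply a per-item credit cost, which can help when comparing tiers. The sketch below divides each plan's yearly credits by its stated maximum video and image counts. The tier data is copied from the listing; the `implied_costs` helper and the idea of a flat per-item cost are assumptions made for illustration, since the listing only gives "up to" limits and the service may charge different amounts per model or resolution.

```python
# Figures copied from the plan listings; tiers are identified by yearly credits
# because the listing does not give plan names or prices.
TIERS = [
    {"credits": 36000, "videos": 360, "images": 7200},
    {"credits": 66000, "videos": 660, "images": 13596},
    {"credits": 156000, "videos": 1560, "images": 31992},
]


def implied_costs(tier):
    """Return (credits per video, credits per image) implied by the stated limits.

    Hypothetical helper: assumes a flat cost per item, which the listing
    does not confirm.
    """
    return tier["credits"] / tier["videos"], tier["credits"] / tier["images"]


for tier in TIERS:
    per_video, per_image = implied_costs(tier)
    print(
        f"{tier['credits']:>6} credits/year: "
        f"{per_video:.2f} per video, {per_image:.2f} per image"
    )
```

Under these assumptions every tier works out to 100 credits per video, while the implied image cost is 5 credits on the smallest plan and slightly under 5 on the two larger ones.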
