HeyVid AI

You type what you want to see

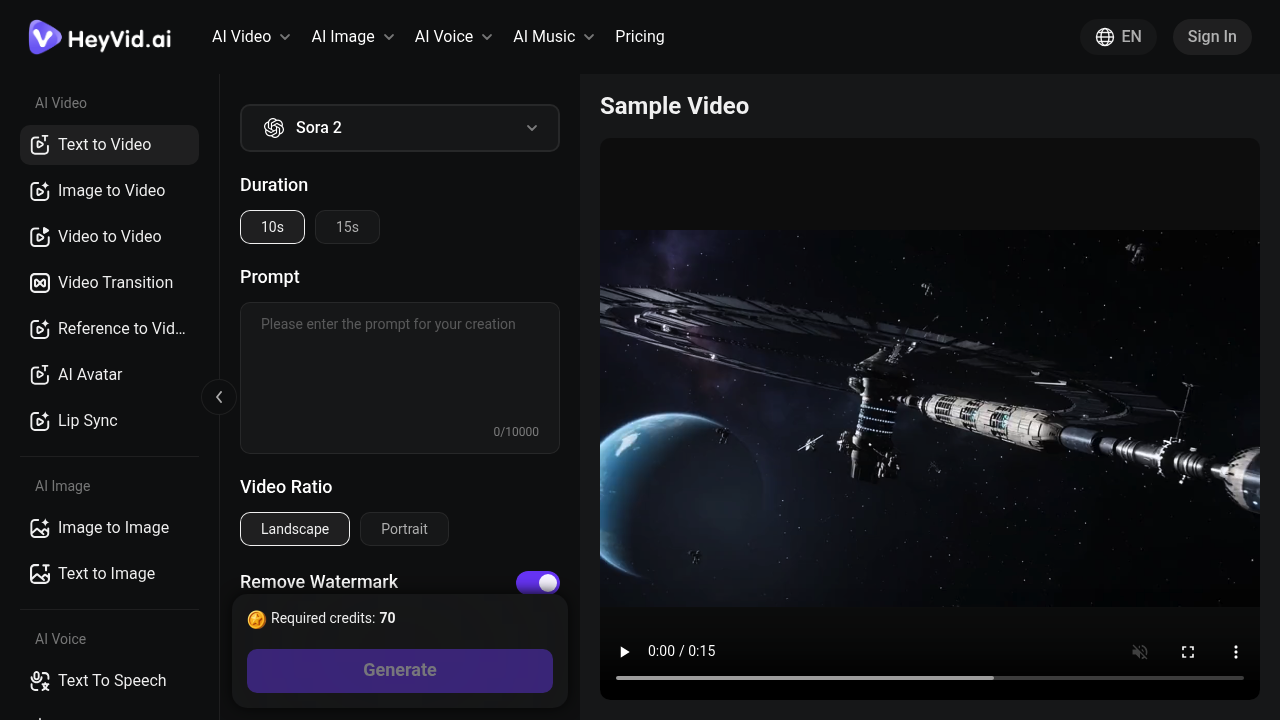

You type what you want to see, and HeyVid AI generates a video using whichever AI model you pick from a dozen options, including Sora, Kling, Runway, and Veo. You're not stuck with just one engine's interpretation of your prompt.

🔗 Similar AI Tools

Discover more tools in this category

LyricsToSongAI

Jan 25

You'll have a complete song with vocals and instruments ready in 30 seconds

MSong.ai

Jan 24

You're stuck on a bus with just your phone and a song idea that won't leave you alone

Suno

Jan 5

Type in a prompt

Vozart

Jan 25

Log in and grab free credits to test Vozart's text-to-music generation

MusicCreator AI

Jan 25

Upload a sunset photo and get a song

MuseGen

Jan 17

Ten credits per song generation adds up fast
